Review Apps

Review Apps are automatically deployed by each pipeline, both in CE and EE.

How does it work?

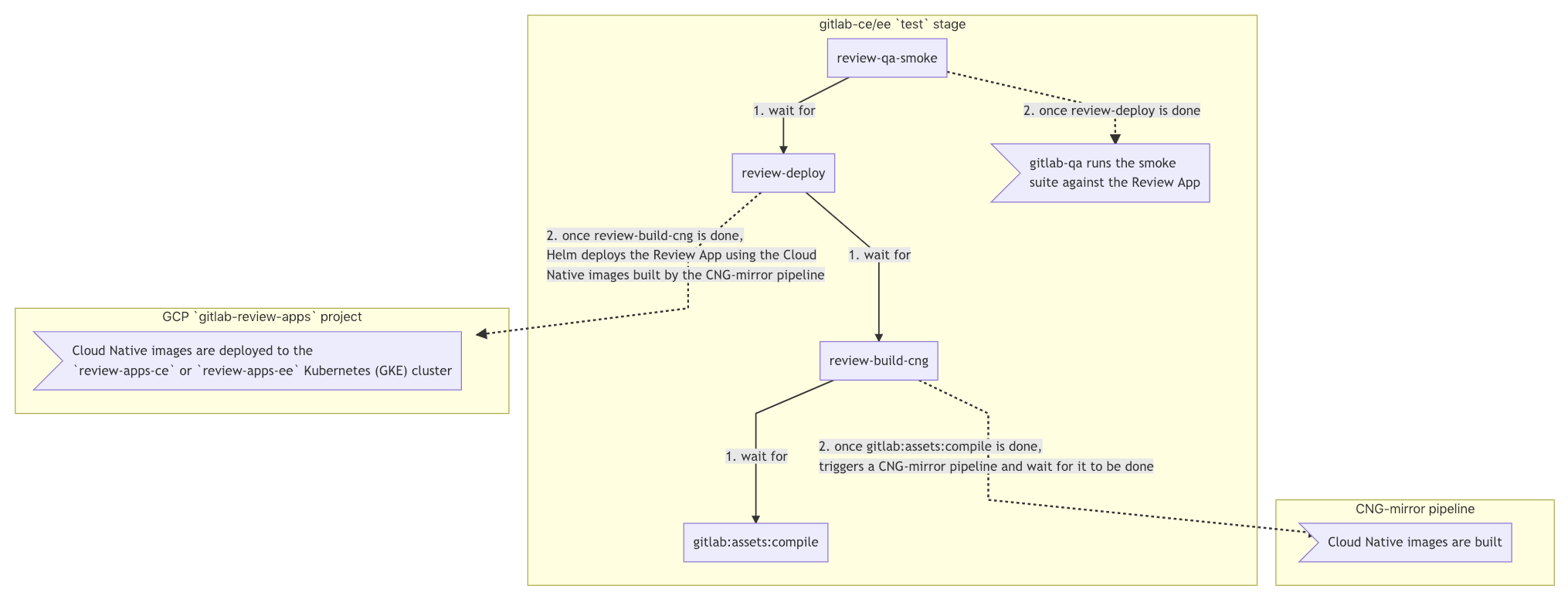

CI/CD architecture diagram

Mermaid source:

    graph TD
        B1 -.->|2. once gitlab:assets:compile is done, triggers a CNG-mirror pipeline and wait for it to be done| A2
        C1 -.->|2. once review-build-cng is done, Helm deploys the Review App using the Cloud Native images built by the CNG-mirror pipeline| A3

        subgraph gitlab-ce/ee test stage
        A1[gitlab:assets:compile]
        B1[review-build-cng] -->|1. wait for| A1
        C1[review-deploy] -->|1. wait for| B1
        D1[review-qa-smoke] -->|1. wait for| C1
        D1[review-qa-smoke] -.->|2. once review-deploy is done| E1>gitlab-qa runs the smoke suite against the Review App]
        end

        subgraph CNG-mirror pipeline
        A2>Cloud Native images are built];
        end

        subgraph GCP gitlab-review-apps project
        A3>"Cloud Native images are deployed to the review-apps-ce or review-apps-ee Kubernetes (GKE) cluster"];
        end

Detailed explanation

- On every pipeline during the test stage, the review-build-cng and review-deploy jobs are automatically started.
  - The review-deploy job waits for the review-build-cng job to finish.
  - The review-build-cng job waits for the gitlab:assets:compile job to finish, since the CNG-mirror pipeline triggered in the following step depends on it.
- Once the gitlab:assets:compile job is done, review-build-cng triggers a pipeline in the CNG-mirror project.
  - The CNG-mirror pipeline creates the Docker images of each component (e.g. gitlab-rails-ee, gitlab-shell, gitaly, etc.) based on the commit from the GitLab pipeline and stores them in its registry.
  - We use the CNG-mirror project so that the registry of the CNG (Cloud Native GitLab) project is not overloaded with a lot of transient Docker images.
- Once the review-build-cng job is done, the review-deploy job deploys the Review App using the official GitLab Helm chart to the review-apps-ce / review-apps-ee Kubernetes cluster on GCP.
  - The actual scripts used to deploy the Review App can be found at scripts/review_apps/review-apps.sh.
  - These scripts are basically our official Auto DevOps scripts where the default CNG images are overridden with the images built and stored in the CNG-mirror project's registry.
  - Since we're using the official GitLab Helm chart, you get a dedicated environment for your branch that's very close to what it would look like in production.
- Once the review-deploy job succeeds, you should be able to use your Review App thanks to the direct link to it from the MR widget. To log into the Review App, see "Log into my Review App?" below.

Additional notes:

- The Kubernetes cluster is connected to the gitlab-{ce,ee} projects using GitLab's Kubernetes integration. This provides a link to the Review App directly from the merge request widget.
- If the Review App deployment fails, you can simply retry it (there's no need to run the review-stop job first).
- The manual review-stop job in the test stage can be used to stop a Review App manually, and is also started by GitLab once a branch is deleted.
- Review Apps are cleaned up regularly using a pipeline schedule that runs the schedule:review-cleanup job.

QA runs

On every pipeline during the test stage, the review-qa-smoke job is automatically started: it runs the smoke QA suite.

You can also manually start the review-qa-all job: it runs the full QA suite.

Note that both jobs first wait for the review-deploy job to finish.

Performance Metrics

On every pipeline during the test stage, the review-performance job is automatically started: this job does basic browser performance testing using Sitespeed.io Container.

This job waits for the review-deploy job to be finished.

How to?

Log into my Review App?

The default username is root and its password can be found in the 1Password secure note named gitlab-{ce,ee} Review App's root password.

Enable a feature flag for my Review App?

- Open your Review App and log in as documented above.

- Create a personal access token.

- Enable the feature flag using the Feature flag API.
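The last step can be sketched as a single call to the Features API (POST /api/v4/features/:name with a value parameter). This is a minimal sketch, not the team's own tooling: the Review App URL, the token and the flag name my_feature_flag are placeholders, and the helper function names are made up for illustration.

```shell
# Sketch only: the URL, token and flag name used below are placeholders.

# Build the Features API endpoint for a Review App base URL and a flag name.
feature_flag_endpoint() {
  # ${1%/} strips one trailing slash from the base URL, if present.
  printf '%s/api/v4/features/%s\n' "${1%/}" "$2"
}

# Enable a flag by POSTing value=true, authenticated with a personal access token.
# Arguments: <review app base URL> <personal access token> <flag name>
enable_feature_flag() {
  curl --fail --silent --show-error \
    --request POST \
    --header "PRIVATE-TOKEN: $2" \
    --data "value=true" \
    "$(feature_flag_endpoint "$1" "$3")"
}

# Example (not executed here, since it needs a live Review App):
#   enable_feature_flag "https://review-app.example.com" "<your_access_token>" "my_feature_flag"
```

The Features API requires administrator access, which is why the token should be created while logged in as root.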

Find my Review App slug?

- Open the review-deploy job.
- Look for the line "Checking for previous deployment of review-*".
- For instance, for "Checking for previous deployment of review-qa-raise-e-12chm0", your Review App slug would be review-qa-raise-e-12chm0.
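If you have the job log as text (for example, copied from the job page), the lookup above can be scripted. The following is a small sketch that assumes the log line appears exactly as quoted above, without color codes around the slug; the function name extract_review_app_slug is made up for this example.

```shell
# Read review-deploy job log text on stdin and print the first Review App slug found.
extract_review_app_slug() {
  sed -n 's/.*Checking for previous deployment of \(review-[a-z0-9-]*\).*/\1/p' | head -n 1
}

# Example with the log line quoted in the steps above:
echo "Checking for previous deployment of review-qa-raise-e-12chm0" | extract_review_app_slug
```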

Run a Rails console?

- Filter Workloads by your Review App slug, e.g. review-29951-issu-id2qax.
- Find and open the task-runner Deployment, e.g. review-29951-issu-id2qax-task-runner.
- Click on the Pod in the "Managed pods" section, e.g. review-29951-issu-id2qax-task-runner-d5455cc8-2lsvz.
- Click on the KUBECTL dropdown, then Exec -> task-runner.
- In the default command, replace "-c task-runner -- ls" with "-- /srv/gitlab/bin/rails c", or:
  - Run "kubectl exec --namespace review-apps-ce -it review-29951-issu-id2qax-task-runner-d5455cc8-2lsvz -- /srv/gitlab/bin/rails c", and
  - Replace review-apps-ce with review-apps-ee if the Review App is running EE, and
  - Replace review-29951-issu-id2qax-task-runner-d5455cc8-2lsvz with your Pod's name.
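To avoid editing the namespace and Pod name by hand, the command can be assembled from two arguments. This is a sketch: the helper names review_app_namespace and rails_console_command are made up for illustration, and the functions only print the command rather than running it.

```shell
# Map the edition (ce or ee) to the namespace named in the steps above.
review_app_namespace() {
  case "$1" in
    ce|ee) printf 'review-apps-%s\n' "$1" ;;
    *) echo "unknown edition: $1 (expected ce or ee)" >&2; return 1 ;;
  esac
}

# Print the kubectl command that opens a Rails console in the given Pod.
# Arguments: <ce|ee> <pod name>
rails_console_command() {
  namespace="$(review_app_namespace "$1")" || return 1
  printf 'kubectl exec --namespace %s -it %s -- /srv/gitlab/bin/rails c\n' "$namespace" "$2"
}

# Example with the Pod name used above:
rails_console_command ce review-29951-issu-id2qax-task-runner-d5455cc8-2lsvz
```

Printing the command instead of executing it lets you check the namespace and Pod name before you connect.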

Dig into a Pod's logs?

- Filter Workloads by your Review App slug, e.g. review-1979-1-mul-dnvlhv.
- Find and open the migrations Deployment, e.g. review-1979-1-mul-dnvlhv-migrations.1.
- Click on the Pod in the "Managed pods" section, e.g. review-1979-1-mul-dnvlhv-migrations.1-nqwtx.
- Click on the Container logs link.
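The same filtering can be approximated from a terminal. The sketch below rests on two assumptions that the steps above do not state: that you have kubectl access to the cluster, and that the Pods live in the review-apps-ce (or review-apps-ee) namespace, as in the Rails console command. The function name pods_for_slug is made up for this example, and it is demonstrated here on canned input rather than a live cluster.

```shell
# Read "pod/<name>" lines (the format printed by `kubectl get pods --output name`)
# on stdin and keep only the Pods whose name starts with the given slug.
pods_for_slug() {
  sed 's|^pod/||' | grep -- "^$1-"
}

# Example with canned input instead of a live cluster:
printf 'pod/review-1979-1-mul-dnvlhv-migrations.1-nqwtx\npod/review-29951-issu-id2qax-task-runner-d5455cc8-2lsvz\n' \
  | pods_for_slug review-1979-1-mul-dnvlhv

# Against a cluster (assumed namespace), then fetch the logs of one Pod:
#   kubectl get pods --namespace review-apps-ce --output name | pods_for_slug review-1979-1-mul-dnvlhv
#   kubectl logs --namespace review-apps-ce review-1979-1-mul-dnvlhv-migrations.1-nqwtx
```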

Frequently Asked Questions

Isn't it too much to trigger CNG image builds on every test run? This creates thousands of unused Docker images.

We have to start somewhere and improve later. Also, we're using the CNG-mirror project to store these Docker images so that we can just wipe out the registry at some point, and use a new fresh, empty one.

How big are the Kubernetes clusters (review-apps-ce and review-apps-ee)?

The clusters are currently set up with a single pool of preemptible nodes, with a minimum of 1 node and a maximum of 50 nodes.

What machines are running on the cluster?

We're currently using n1-standard-16 (16 vCPUs, 60 GB memory) machines.
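Taken together, the node limit and the machine type give an upper bound on the capacity of each cluster. The arithmetic below is only an illustration using the figures quoted in the two answers above; the variable names are made up.

```shell
# Figures quoted above: up to 50 nodes, each with 16 vCPUs and 60 GB of memory.
max_nodes=50
vcpus_per_node=16
memory_gb_per_node=60

# Upper bound per cluster when the node pool is fully scaled out.
max_vcpus=$((max_nodes * vcpus_per_node))
max_memory_gb=$((max_nodes * memory_gb_per_node))

echo "max vCPUs: ${max_vcpus}, max memory: ${max_memory_gb} GB"
```

This is a ceiling, not the usual footprint: the pool can shrink to a single node, and preemptible nodes can be reclaimed at any time.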

How do we secure this from abuse? Apps are open to the world so we need to find a way to limit it to only us.

This isn't enabled for forks.